AI Governance for Boards and a Practical Guide for Leaders Managing the Twin AI and Sustainability Transitions

TL;DR

AI is now a core board issue, shaping strategy, risk and ESG performance, it can’t be left to IT or innovation alone.

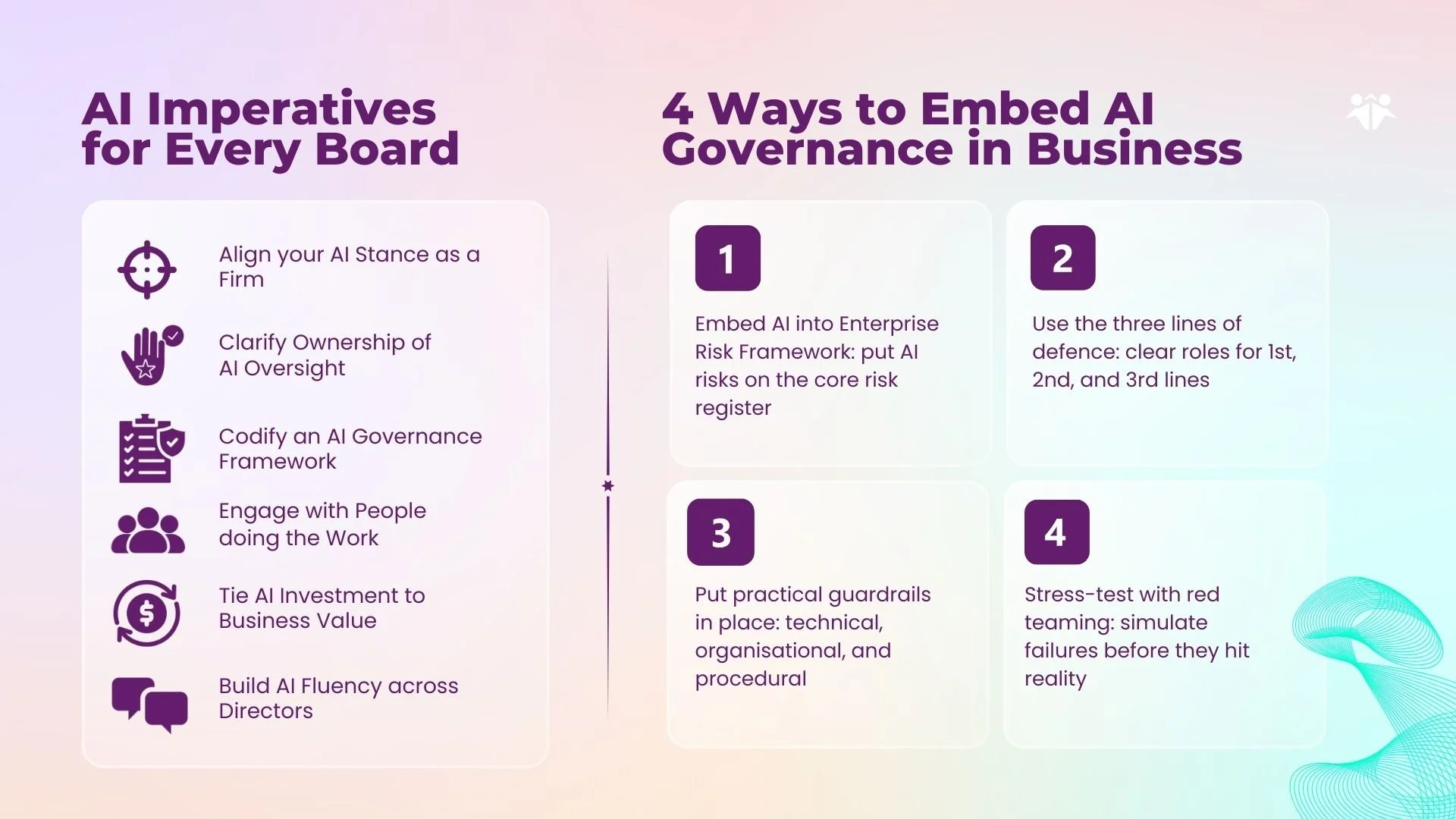

Every board should align on its AI stance, clarify oversight, codify a governance framework, engage with practitioners, tie AI to value (including ESG) and build director fluency.

Embedding AI into your Enterprise Risk Management framework, three lines of defence, guardrails and red teaming efforts keeps governance practical and credible.

AI Governance for Boards and Sustainability Focused Leaders

AI governance now belongs squarely on the board agenda, not just in the IT or innovation committee. As AI moves into core decisions about capital allocation, customers, supply chains and people, it creates a mix of strategic opportunity, enterprise risk and ESG impact that directors cannot delegate away.

For sustainability‑focused leaders, this shift cuts both ways. AI can help you make better climate and ESG decisions, but it can also amplify biases, greenwashing, privacy breaches and environmental harm if it is deployed without clear guardrails. Effective ESG is increasingly understood to include AI governance: boards are expected to treat AI‑related risks and impacts with the same discipline they apply to financial, operational and climate risks.

This article offers a practical starting point for boards and executive teams who want to get ahead of that curve. It lays out six core imperatives that every board should tackle on AI, shows how the board’s role shifts depending on your organisation’s AI stance, and then walks through four concrete ways to embed AI governance into how the business actually works.

Why AI Governance Belongs on the Board Agenda

AI shapes the core levers boards are responsible for: strategy, risk and long‑term value. It is embedded in pricing, underwriting, supply chains, customer journeys and internal controls, which means AI failures can quickly become earnings, reputation and regulatory events that sit squarely within directors’ fiduciary duties.

At the same time, regulators and investors are making AI oversight increasingly explicit. Supervisors are signalling that AI‑related bias, privacy breaches, greenwashing and “AI‑washing” are enforcement priorities, while investors expect boards to treat AI as part of their ESG and risk responsibilities rather than a side project for IT. This is why many companies are now updating committee charters so that AI oversight sits clearly with the full board or with designated audit, risk, technology or ESG committees.

For sustainability‑focused leaders, the stakes are even higher. AI is already used in climate‑risk models, ESG ratings, net‑zero planning, supply‑chain due diligence and workforce analytics, all of which influence disclosures and stakeholder trust, and are increasingly recognised as par of the boards role in AI and Sustainability. Poorly governed AI in these areas can undermine hard‑won ESG progress; whereas well‑governed AI can make your sustainability strategy more credible, auditable and effective.

Against this backdrop, treating AI governance as a “nice to have” innovation topic is no longer tenable. Boards need a clear view on where AI creates value, how it changes the organisation’s risk profile, and what guardrails, assurance and accountability are in place.

The rest of this article turns that expectation into a practical agenda: six imperatives every board should act on, how the board’s role shifts by AI stance, and four ways to embed AI governance into the fabric of the business.

Six AI Imperatives Relevant for Every Board

𝟙 Align on your AI posture, and review it (at least) annually

Boards need a clear, shared view of how bold or cautious the organisation intends to be with AI, and how that posture aligns with strategy, risk appetite and stakeholders’ expectations. Without this, AI initiatives proliferate in pockets, creating hidden risk and fragmented impact. A defined posture, revisited at least annually, anchors decisions about where AI is appropriate, where it is not, and how quickly to scale.

From an ESG standpoint, posture also signals how the company will balance AI’s benefits with its environmental, social and governance footprint. For example, boards should be explicit about how they will manage AI’s energy use, potential workforce disruption and exposure to bias or greenwashing risk as adoption accelerates.

𝟚 Clarify ownership of AI oversight, both within the board and management team

AI governance fails quickly when no one is clearly accountable. Boards should decide whether AI oversight sits with the full board or specific committees (risk, audit, technology, ESG), and ensure there is a named executive owner at management level. Clear ownership avoids gaps where critical AI decisions fall between IT, risk, legal, sustainability and the business.

This is increasingly important because investors and regulators now view AI governance as part of overall corporate and ESG governance quality. When responsibility is diffuse, AI‑related bias, privacy breaches or misleading AI‑enabled disclosures can slip through, undermining both ESG reporting and stakeholder trust.

𝟛. Codify an AI governance policy framework

Directors should expect management to move beyond principles to a concrete AI governance framework: policies, standards and processes that cover data, model lifecycle, accountability, documentation and escalation. This framework should integrate with existing risk, compliance and data‑governance structures rather than sit on an island in the innovation function.

Codification is also what makes AI governance visible in ESG terms. Policies on responsible AI, human‑rights impacts, environmental footprint, workforce impacts and third‑party AI use allow the company to disclose credibly how it is managing AI‑related ESG risks and opportunities. In many jurisdictions, this level of transparency is rapidly becoming an expectation from investors, ratings agencies and regulators.

𝟜. Engage more broadly (and frequently) with those doing the work

Good AI oversight requires boards to hear directly from the people designing, deploying and being affected by AI, not just from a single sponsor or vendor. Directors should seek regular, concise briefings from cross‑functional teams spanning technology, risk, sustainability, operations and HR, as well as from worker representatives where appropriate. This helps the board understand how AI is really being used, what is working and where frontline concerns are emerging.

For ESG‑focused boards, this engagement is a way to surface social and ethical risks early. It opens the door to input on fairness, inclusion, job quality, safety, environmental impact and community effects of AI deployments that may not show up in traditional dashboards. These insights can then feed back into both strategy and ESG reporting, rather than becoming a reputational issue after the fact.

𝟝. Tie AI investment to business value

Boards should insist that AI spend is treated like any other strategic investment: linked to clear value hypotheses, defined success metrics and disciplined portfolio management. This means focusing on a small number of high‑impact use cases where AI can demonstrably improve efficiency, resilience, revenue or risk management, rather than chasing every new tool.

𝟞. Build AI fluency across directors

Finally, boards cannot govern what they do not understand. Directors do not need to become data scientists, but they do need enough AI fluency to ask the right questions about data, models, risks, controls and ESG implications. Targeted board education, scenario‑based workshops and exposure to real use cases can quickly raise the baseline.

Source: McKinsey, Boards in the Age of AI Webcast, and Oxford AI for Business Professionals

How the Board's "Challenger" Role Varies with AI Stance

As boards clarify their organisation’s AI stance, their traditional role as challenger does not disappear, but it does change shape. The questions a board should ask, the risks it must probe and the ESG issues it needs to surface will look different for a cautious Pragmatic Adopter than for an Internal Transformer rewiring the whole operating model with AI.

The board should …

Pragmatic Adopter

For Pragmatic Adopters, the board’s challenge is to ensure the organisation is not sleepwalking into AI risk while “doing a little bit of AI on the side.” Directors should push for clear visibility of where AI is already in use, whether basic guardrails are in place, and how management will pivot if the competitive landscape shifts faster than expected

Business Pioneer

In Business Pioneer organisations, AI is central to new products, services or business models, so the board must challenge whether the company is making the right strategic bets and has the leadership, capital and resilience to sustain them given how fast traditional moats can erode. Directors should scrutinise portfolio choices, unit‑economics, scaling assumptions and dependency on unproven technologies or partners.

Functional Reinventor

Functional Reinventors use AI to deeply transform specific functions, for example, finance, supply chain, manufacturing or sustainability operations. The board’s challenger role here is to ensure coherence across initiatives, avoid a patchwork of disconnected tools and prevent over‑reliance on vendors or shadow IT.

Internal Transformer

Internal Transformers treat AI as a lever to rewire the whole operating model, often with automation, agents and data products embedded across business units. The board’s challenge is to oversee this at‑scale while pushing for structural change: breaking silos, enabling cross‑functional workflows and ensuring that governance and controls keep pace with transformation. Boards also need to probe organizational resilience: can the company re-skill people fast enough, realign incentives, adapt governance and harden systems as AI becomes integral to day‑to‑day operations?

4 Good Practices for AI Implementation

So what does good AI implementation actually look like in practice? Let's walk through some of the key components, from risk frameworks to guardrails, red teaming, and the three lines of defense.

Embed AI into Your Enterprise Risk Management Framework

AI should not sit in a separate “innovation” risk bucket. It cuts across the same categories boards already oversee: reputation, conduct, compliance, operational resilience, cyber, legal and strategy. Bias, opaque decision‑making, job displacement, model failure, over‑reliance on vendors, GDPR breaches, environmental footprint and IP disputes are all AI‑related risks that belong on the core risk register alongside other strategic and operational threats.

The governance challenge is to weave AI into existing enterprise risk management (ERM), not bolt on a parallel process. AI‑related risks should be identified, assessed and monitored through the same mechanisms you already use for financial, operational and compliance risks: risk registers, control libraries, incident reporting, internal controls and assurance. This allows the organisation to use familiar playbooks, thresholds, owners, mitigation plans and escalation routes, while still recognising AI’s specific characteristics.

At the same time, ERM for AI cannot be purely backward‑looking. If boards rely only on traditional templates and historic benchmarks, they risk underestimating emerging threats such as systemic bias, model hallucinations, AI‑enabled misinformation or escalating energy use. AI risk management needs to be more dynamic: incorporating horizon‑scanning, scenario planning and periodic “deep dives” on high‑impact AI systems so that new risk types and ESG implications are surfaced and addressed, not just checked off against today’s legal obligations.

Use the Three Lines of Defence for AI Assurance

A mature AI governance system should build on the familiar three lines of defence model. This clarifies who owns AI risk day to day, who provides expert oversight and who offers truly independent assurance to the board.

In the first line, the teams that build, procure or use AI systems own the risks that arise from them. They are responsible for conducting basic risk and impact assessments, completing governance checks and operating within defined guardrails, often supported by local compliance leads or responsible‑AI managers.

The second line is formed by specialist oversight functions, for example, risk, compliance, legal, data governance or an AI oversight committee. Their role is to set AI policies and standards, monitor adherence, challenge first‑line decisions on higher‑risk systems and coordinate responses to emerging regulatory and ESG expectations.

The third line is internal audit, sometimes complemented by external assurance providers for high‑risk use cases. It provides independent, objective assurance to the board on whether the overall AI governance framework is effective in practice, testing controls, reviewing documentation, and identifying gaps or weaknesses across the first two lines.

By separating responsibilities in this way, organisations reduce the risk of internal bias, close coverage gaps and create a system of checks and balances that builds trust in AI deployment with regulators, investors and other stakeholders.

Put Practical AI Guardrails in Place

Guardrails are the practical tools and processes that keep AI systems within safe and acceptable bounds as they move into real‑world use. They turn high‑level principles into day‑to‑day constraints and prompts that help teams make better decisions under pressure.

These guardrails span technical, organisational and procedural controls.

Technical guardrails include explainability standards, model version control, documented validation procedures and clear limits on where and how models can be used.

Organisational guardrails embed risk assessments and impact reviews into project plans, add structured review checkpoints, and define escalation routes when something looks off.

Procedural safeguards cover staff training, whistleblower or speak‑up channels, and audit logs that track model behaviour over time.

Guardrails will never remove all risk, but they make risks visible, traceable and easier to manage. They also create a shared understanding of expectations across functions, which is essential when AI is used in high‑stakes or cross‑functional settings such as credit decisions, safety‑critical operations, ESG disclosures or workforce management.

Stress‑Test AI with Red Teaming

Red teaming is becoming a core practice for serious AI governance. Borrowed from cybersecurity, it involves deliberately simulating attacks, errors and unintended uses to expose vulnerabilities before systems are deployed at scale. Instead of assuming a model will behave as expected, you pay people to try to break it, so you learn in a controlled environment rather than in the headlines.

A good red‑teaming exercise might probe how a model responds to adversarial prompts, how it behaves when datasets are subtly manipulated, or whether it can be nudged into generating harmful or misleading outputs. It can also reveal security gaps that normal testing misses, such as ways to bypass controls, exfiltrate sensitive data or produce deceptive content. For generative AI, autonomous agents and AI used in ESG disclosures or customer decisions, this kind of stress‑testing is especially important.

Organisations can build red‑teaming capability in‑house, use specialist external partners or pursue a hybrid model. External experts bring fresh attack mindsets and up‑to‑date knowledge of emerging threats, but relying on them alone can weaken internal capability and integration into day‑to‑day processes. Whatever the resourcing model, the goal is the same: AI systems that are safe, traceable and aligned with your values and obligations, tested under pressure before regulators, customers or affected communities do it for you.

When red teaming is integrated with your risk framework, guardrails and three‑lines‑of‑defence model, it becomes a powerful assurance tool rather than a one‑off experiment. It reinforces a culture of scepticism and preparedness, helps boards see where high‑impact AI systems are fragile, and supports more credible ESG and risk disclosures.

Conclusion

AI has moved from the margins of innovation decks into the heart of board responsibility. It now influences how companies allocate capital, engage customers, manage supply chains and report on ESG – and, increasingly, how regulators and investors judge governance quality. That makes AI governance not just a technical concern, but a strategic, risk and sustainability imperative.

For boards and sustainability‑focused executives, the challenge is to turn a complex, fast‑moving topic into a manageable agenda. The six imperatives you have seen, from agreeing your AI stance to building director fluency, provide a common baseline for any organisation, while the stance‑based archetypes help you tailor your challenger role to where your company really is on the AI journey. Embedding AI into your risk framework, assurance model, guardrails and red‑teaming practice then ensures that governance is lived through existing systems, not bolted on as a new bureaucracy.

Getting this right will not make AI risk disappear, and it will not guarantee every AI project creates value. But it will give your board a coherent way to see where AI helps and hurts, to insist on ESG‑aware safeguards, and to steer management towards uses of AI that genuinely support long‑term resilience and sustainable value creation. If your organisation needs help designing or stress‑testing that governance approach, now is the moment to do it – before AI is so deeply embedded that your only option is to retrofit controls around systems you no longer fully understand.