How Sustainability Leaders Can Customize AI and Launch High-Value Use Cases

Artificial intelligence is moving from experimentation to execution, and sustainability leaders are under growing pressure to apply it in practical ways. Across the US, Canada, Singapore, and the Middle East, organizations are exploring AI for ESG reporting, climate risk analysis, supply chain transparency, carbon accounting, and sustainability decision-making.

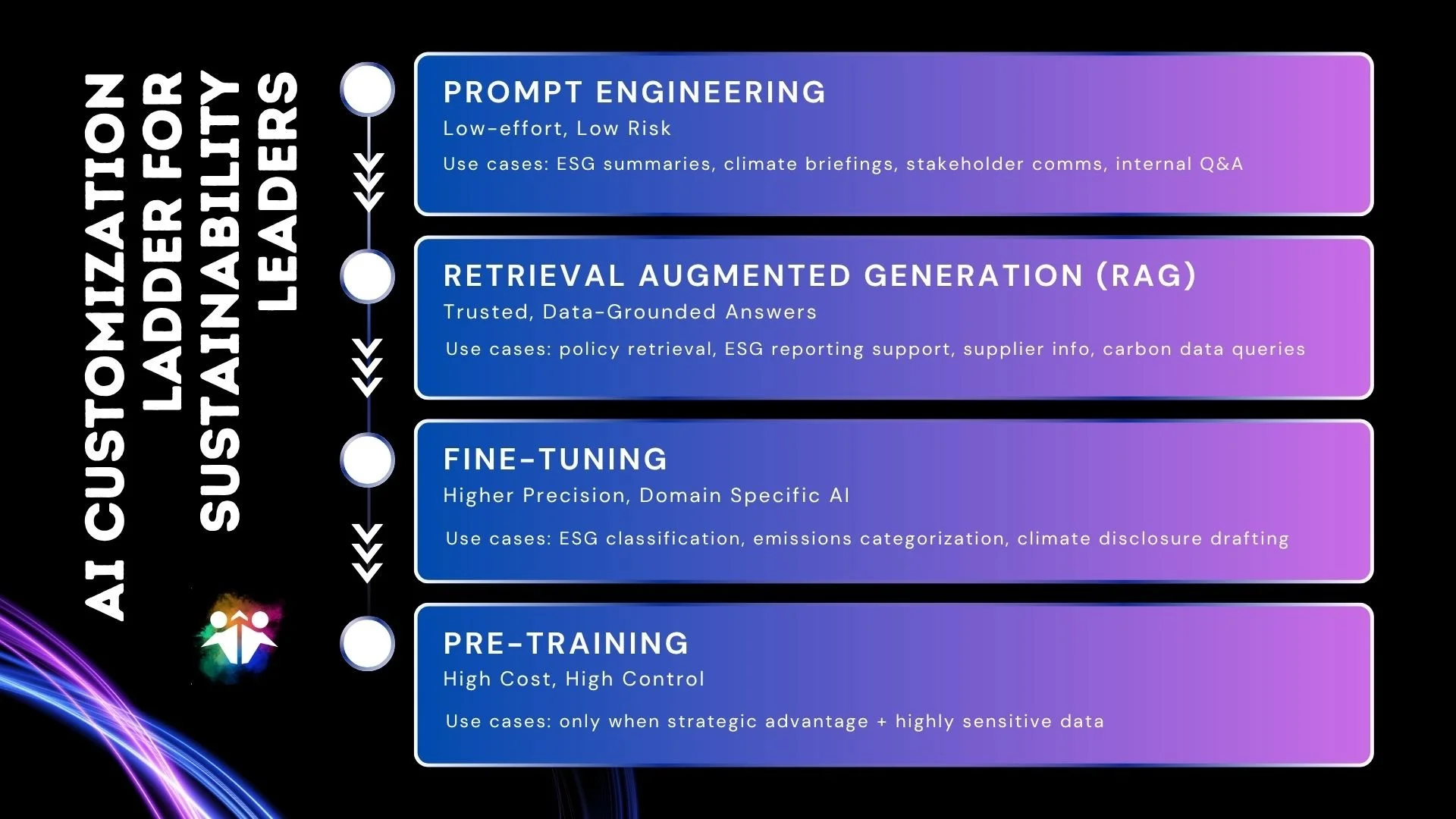

But not every organization needs the same level of AI sophistication. The most effective approach depends on the complexity of the use case, the quality of your data, and the maturity of your organization. For most sustainability teams, the smartest path is to start with manageable customization, prove value quickly, and scale only when the business case is clear.

How to Customize AI for Sustainability Use Cases

Most organizations do not need to start with advanced model training. For many sustainability use cases, the best results come from simpler forms of AI customization such as prompt engineering, retrieval-augmented generation, and targeted fine-tuning.

The right method depends on what you are trying to achieve. Some use cases only require better prompting. Others need access to trusted data. More advanced use cases may justify fine-tuning. Very few organizations need to pre-train a model from the ground up.

Prompt Engineering for Sustainability Teams

Prompt engineering is often the easiest and most immediate way to tailor an LLM to your needs. Instead of altering the model itself, you shape the instructions it receives so it produces more relevant, structured, and consistent outputs from generative AI tools and AI assistants. For many organizations, it is the most practical starting point because it delivers value quickly without requiring heavy technical investments or major changes to infrastructure.

For sustainability teams, this can quickly improve ESG summaries, climate briefing notes, stakeholder communications, carbon reporting drafts, and internal Q&A. It is low cost, low risk, and a strong entry point for teams that want to explore AI without committing to major infrastructure or data investment.
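As a minimal sketch of what this looks like in practice, the Python snippet below assembles a structured prompt for an ESG summary. The function name `build_esg_summary_prompt`, its parameters, and the wording of the instructions are illustrative assumptions rather than a prescribed template. The resulting string would be passed to whichever AI assistant or LLM tool your team already uses.

```python
def build_esg_summary_prompt(source_text: str, audience: str, max_bullets: int = 5) -> str:
    """Assemble a structured prompt. All instruction wording is an illustrative assumption."""
    instructions = [
        "You are assisting a corporate sustainability team.",
        f"Summarize the source text for this audience: {audience}.",
        f"Use at most {max_bullets} bullet points.",
        "Quote figures exactly as they appear and include their units.",
        "If the source does not contain a requested fact, say so instead of guessing.",
    ]
    # Keep instructions and source material clearly separated for the model.
    return "\n".join(instructions) + "\n\nSOURCE TEXT:\n" + source_text.strip()


if __name__ == "__main__":
    # The source sentence below is a made-up placeholder, not real data.
    print(build_esg_summary_prompt("Scope 1 emissions fell.", "board members", max_bullets=3))
```

Keeping the instructions in one reusable function means the whole team gets the same structure and tone, and a change to the template is made in a single place.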

Retrieval-Augmented Generation for Trusted AI Responses

The next step is retrieval-augmented generation, or RAG. This approach combines the model’s language ability with trusted internal sources, such as sustainability policies, ESG reports, carbon data, supplier records, and approved knowledge bases.

RAG is especially useful when accuracy, traceability, and context matter. Rather than relying only on pre-trained knowledge, the model can search approved sources and generate answers grounded in current information, improving traceability, auditability, and confidence in the output. For sustainability leaders, that makes it particularly valuable for reporting support, policy retrieval, and operational decision support.

When prompt engineering and RAG are combined, you get something closer to a custom AI assistant or custom GPT experience.
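The retrieve-then-generate pattern can be sketched in a few lines of Python. This is a toy illustration under stated assumptions: the three documents and their IDs are made up, and simple word overlap stands in for the embedding-based search a production RAG system would normally use. The helper names `retrieve` and `build_grounded_prompt` are hypothetical.

```python
import re

# Hypothetical approved knowledge base. IDs and text are made-up examples.
DOCUMENTS = {
    "policy-travel": "Business travel emissions are reported under Scope 3 category 6.",
    "policy-energy": "Purchased electricity is reported under Scope 2 using supplier invoices.",
    "policy-waste": "Waste generated in operations is tracked monthly by each site.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of words and numbers."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, documents: dict, top_k: int = 2) -> list:
    """Rank documents by words shared with the question (a stand-in for vector search)."""
    q = tokenize(question)
    scored = [(len(q & tokenize(text)), doc_id, text) for doc_id, text in documents.items()]
    scored.sort(key=lambda item: (-item[0], item[1]))  # best overlap first, then by ID
    return [(doc_id, text) for score, doc_id, text in scored[:top_k] if score > 0]


def build_grounded_prompt(question: str, passages: list) -> str:
    """Combine retrieved passages with instructions that require citing the source ID."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below and cite the source ID in brackets.\n"
        "If the sources do not cover the question, say so.\n\n"
        f"SOURCES:\n{context}\n\nQUESTION: {question}"
    )


if __name__ == "__main__":
    question = "How is purchased electricity reported?"
    print(build_grounded_prompt(question, retrieve(question, DOCUMENTS)))
```

Because every passage carries its source ID into the prompt, a reviewer can trace each statement in the answer back to an approved document.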

Fine-tuning LLMs for Sustainability Use Cases

Fine-tuning is the next level of AI customization and involves taking a pre-trained model, such as Llama or Mistral, and continuing its training on your own data.

For sustainability use cases, fine-tuning can improve performance on emissions categorization, climate disclosure drafting, and sustainability knowledge extraction. It can also help the model adopt a more consistent tone and better reflect your organization’s terminology.

However, fine-tuning requires discipline. It needs a secure training environment with strong controls around data access, privacy, and governance, supported by clear AI guardrails. It is best done iteratively, with validation after each cycle so you can monitor model drift, reduce hallucinations, and correct errors before wider deployment. If the training data is inconsistent or poorly controlled, the model may reproduce those weaknesses at scale.

Pre-Training a Model for Sustainability

At the highest end of complexity is pre-training a model from scratch, which is by far the most resource-intensive approach to AI customization. It requires very large volumes of high-quality data, advanced computing infrastructure, and a specialist AI team capable of designing, training, and maintaining the model over time. For teams concerned with the energy impact of large-scale training, adopting a net-positive AI energy framework can help balance computational efficiency with climate goals.

In practice, this is a major investment, both financially and operationally, and it is only justified in cases where an organization needs deep control over the model’s behavior, architecture, and outputs.

In the end, customizing a large language model is about choosing the right level of sophistication for the problem you are trying to solve. The most effective organizations begin with prompt engineering, add RAG when they need grounded and trusted responses, move to fine-tuning when precision becomes essential, and only consider pre-training when the business case is truly compelling. Knowing where your use case sits on that spectrum allows you to invest with more confidence, reduce unnecessary complexity, and get better value from AI at every stage.

AI Customization Ladder for Sustainability Leaders

How to Launch an AI Use Case for Sustainability

Once you have chosen the right level of customization, the next step is to move from concept to execution. For sustainability leaders, the goal is not to build AI for its own sake. It is to solve a real business problem in a way that is secure, practical, and measurable.

That might mean accelerating ESG reporting, improving carbon data workflows, supporting supply chain due diligence, automating sustainability knowledge search, or strengthening climate risk analysis.

① Choosing the Right Foundation Model

Start by selecting the right foundation model for the job. Many organizations begin with a publicly available model or an open-source option such as Mistral or Llama, depending on security requirements, licensing terms, and deployment preferences.

The best model is not always the largest one. It is the one that fits the use case, the risk profile, and the organization’s operating environment.

② Preparing Sustainability Data for AI

Next, prepare the data the model will rely on. In sustainability, that may include ESG reports, supplier questionnaires, carbon inventories, environmental disclosures, policy documents, or internal guidance materials.

This data should be cleaned, standardized, and formatted consistently. Good data preparation is often the difference between a pilot that demonstrates value and one that fails to gain traction.
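To make "standardized and formatted consistently" concrete, here is a small Python sketch that normalizes one supplier emissions record. The field names, the assumption that incoming dates use a DD/MM/YYYY format, and the choice of tonnes as the target unit are all hypothetical; your own schema will differ.

```python
from datetime import datetime

# Divisors that convert a mass unit to metric tonnes (exact metric conversions).
PER_TONNE = {"t": 1, "kg": 1_000, "g": 1_000_000}


def standardize_record(raw: dict) -> dict:
    """Normalize one supplier record. Field names are illustrative assumptions."""
    unit = raw["unit"].strip().lower()
    if unit not in PER_TONNE:
        # Fail loudly rather than silently guessing an unknown unit.
        raise ValueError(f"Unknown unit: {raw['unit']!r}")
    return {
        # Collapse stray whitespace in the supplier name.
        "supplier": " ".join(raw["supplier"].split()),
        # Express every amount in tonnes of CO2e.
        "emissions_tco2e": round(float(raw["amount"]) / PER_TONNE[unit], 6),
        # Assumes DD/MM/YYYY input; output is ISO 8601 (YYYY-MM-DD).
        "period_end": datetime.strptime(raw["period_end"], "%d/%m/%Y").date().isoformat(),
    }


if __name__ == "__main__":
    # Made-up sample record for illustration only.
    sample = {"supplier": "  Acme   Logistics ", "amount": "1250", "unit": "KG", "period_end": "31/12/2024"}
    print(standardize_record(sample))
```

Rejecting unknown units outright is a deliberate choice here: a record that fails visibly is easier to fix than one that enters the dataset with the wrong magnitude.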

③ Cleaning and Labelling Data for AI Training

Before training or deployment, the data should be reviewed for quality, consistency, and bias. That may include removing duplicates, anonymizing sensitive information, correcting errors, and involving subject matter experts to validate key labels or outputs.

This step is particularly important in sustainability, where terminology can vary across frameworks, jurisdictions, and business units. If the data is unreliable, the model will be too.
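Two of the cleaning steps mentioned above, removing duplicates and anonymizing sensitive information, can be sketched as follows. This is a simplified illustration: the regular expression only catches common email formats, and real anonymization would also need to cover names, phone numbers, and other identifiers. The function names are hypothetical.

```python
import re

# Matches common email address formats. It is not a complete validator.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def redact_emails(text: str) -> str:
    """Replace anything that looks like an email address with a placeholder."""
    return EMAIL_PATTERN.sub("[EMAIL]", text)


def clean_records(texts: list) -> list:
    """Redact emails, normalize whitespace, and drop empty or duplicate entries."""
    seen = set()
    cleaned = []
    for text in texts:
        normalized = " ".join(redact_emails(text).split())
        key = normalized.lower()  # treat entries differing only in case as duplicates
        if normalized and key not in seen:
            seen.add(key)
            cleaned.append(normalized)
    return cleaned


if __name__ == "__main__":
    # Made-up sample entries for illustration only.
    samples = [
        "Contact jane.doe@example.com for data.",
        "contact  JANE.DOE@example.com for data.",
        "",
        "Site A uses diesel.",
    ]
    print(clean_records(samples))
```

Automated checks like these handle the mechanical part of the work. Validating labels against the right reporting framework still needs a subject matter expert.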

④ Fine-tuning AI Models in a Secure Environment

If the use case requires more precision, fine-tuning may be the right next step. Parameter-efficient methods such as LoRA or QLoRA can reduce compute requirements while still adapting the model to your domain.

LoRA (Low-Rank Adaptation) and QLoRA (Quantized LoRA) are techniques for fine-tuning large language models (LLMs) efficiently, often on a single GPU rather than a large enterprise cluster. LoRA freezes the original model weights and trains only a small set of added low-rank matrices, which typically amount to a tiny fraction of the model’s parameter count. QLoRA goes further by quantizing the frozen base model to 4-bit precision, which makes it the more memory-efficient of the two.

Put simply, QLoRA is LoRA that has shed weight by hitting the gym: it reduces the memory needed to store the original base model.

This can help the model better interpret sustainability language, structure reporting outputs, or generate more relevant responses for internal users. For high-value workflows, fine-tuning can be a powerful way to improve consistency and performance.
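The savings from LoRA and QLoRA come down to simple arithmetic, which the short Python sketch below works through. The layer size (4096 by 4096), the rank of 8, and the 7-billion-parameter model are hypothetical figures chosen for illustration. The memory estimate covers the stored weights only and ignores quantization overhead, activations, and optimizer state.

```python
def lora_trainable_params(d_out: int, d_in: int, rank: int) -> int:
    """LoRA adds two low-rank matrices: B (d_out x rank) and A (rank x d_in)."""
    return rank * (d_out + d_in)


def weight_memory_gb(num_params: int, bits_per_param: int) -> float:
    """Memory for the weights alone, in gigabytes (10**9 bytes)."""
    return num_params * bits_per_param / 8 / 1e9


if __name__ == "__main__":
    # Hypothetical single weight matrix of 4096 x 4096 with LoRA rank 8.
    full = 4096 * 4096
    lora = lora_trainable_params(4096, 4096, rank=8)
    print(f"Trainable with LoRA: {lora:,} of {full:,} parameters ({lora / full:.2%})")

    # Hypothetical 7-billion-parameter base model stored at 16-bit versus 4-bit.
    params = 7_000_000_000
    print(f"16-bit weights: {weight_memory_gb(params, 16):.1f} GB")
    print(f"4-bit weights:  {weight_memory_gb(params, 4):.1f} GB")
```

In this example the LoRA matrices are well under one percent of the original layer, and storing the base weights at 4-bit takes a quarter of the memory of 16-bit storage. That is why these methods bring fine-tuning within reach of smaller teams.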

Fine-tuning also demands a secure training environment, with strong controls around data access, privacy, and governance throughout the process. It is typically done in iterations, so that you can adjust for model drift and hallucinations, and evaluated against a validation set to track performance across tasks like ESG data extraction, emissions categorization, and climate disclosure drafting.

For most sustainability organizations, this level of investment is unnecessary. It makes sense only where there is a strong strategic advantage, highly sensitive data, or a need for full control over the model architecture and behavior.

⑤ Testing, Validating and Deploying AI Safely

Before launch, test the model on unseen examples and measure performance against clear success criteria. For sustainability use cases, that might include checking accuracy in ESG data extraction (for example, in AI-enabled ESG reporting platforms such as Watershed), consistency in emissions categorization, or quality in draft disclosures and summaries.
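A simple way to measure performance against a success criterion is to compare model outputs with expert-labeled examples the model has not seen. The Python sketch below does this for emissions categorization. The labels, the sample data, and the 90% pass threshold are assumptions for illustration; your own criteria should come from the business need.

```python
def accuracy(predictions: list, expected: list) -> float:
    """Share of held-out examples where the model output matches the expert label."""
    if not expected or len(predictions) != len(expected):
        raise ValueError("Need two non-empty lists of equal length.")
    correct = sum(1 for p, e in zip(predictions, expected) if p == e)
    return correct / len(expected)


def mismatches(predictions: list, expected: list) -> list:
    """Return (index, predicted, expected) for each error so an expert can review it."""
    return [(i, p, e) for i, (p, e) in enumerate(zip(predictions, expected)) if p != e]


def passes(predictions: list, expected: list, threshold: float = 0.9) -> bool:
    """Check the result against a success criterion (the 0.9 default is an assumption)."""
    return accuracy(predictions, expected) >= threshold


if __name__ == "__main__":
    # Made-up held-out labels and model outputs for illustration only.
    expert_labels = ["Scope 1", "Scope 2", "Scope 3", "Scope 3"]
    model_outputs = ["Scope 1", "Scope 2", "Scope 3", "Scope 2"]
    print("Accuracy:", accuracy(model_outputs, expert_labels))
    print("Passes threshold:", passes(model_outputs, expert_labels))
    print("To review:", mismatches(model_outputs, expert_labels))
```

Listing the individual mismatches matters as much as the headline score, because it shows reviewers exactly which categories the model confuses.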

Once the model performs reliably, deploy it in a secure environment with human oversight. A sandboxed workflow, approval process, and ongoing monitoring will help reduce risk and keep the system aligned with business needs as data, regulations, and stakeholder expectations evolve.
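One way to picture an approval process is as a gate that AI-generated drafts cannot pass without a named human reviewer. The Python sketch below is a hypothetical illustration of that idea, with made-up class and function names. A real deployment would sit inside your existing workflow and access-control tools.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Draft:
    """An AI-generated draft awaiting human review (hypothetical structure)."""
    text: str
    approved_by: Optional[str] = None
    audit_log: list = field(default_factory=list)


def approve(draft: Draft, reviewer: str) -> None:
    """Record which named reviewer signed off on the draft."""
    draft.approved_by = reviewer
    draft.audit_log.append(f"approved by {reviewer}")


def publish(draft: Draft) -> str:
    """Release the text only if a human has approved it."""
    if draft.approved_by is None:
        raise PermissionError("Draft has not been approved by a human reviewer.")
    draft.audit_log.append("published")
    return draft.text


if __name__ == "__main__":
    draft = Draft(text="Draft disclosure paragraph.")
    approve(draft, "reviewer-1")
    print(publish(draft))
    print(draft.audit_log)
```

The audit log gives monitoring something to work with: over time it shows who approved what, which supports both accountability and later review.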

What High-Performing Sustainability Teams Do Differently

The sustainability leaders getting the best results from AI are not necessarily the ones using the most advanced models. They are the ones making smart choices about scope, governance, and implementation.

They start small, focus on one high-value workflow, and build from there. They make sure the use case is tied to a real business need. They treat data as a strategic asset. And they put governance in place early, rather than trying to add it later. For some organizations, this includes piloting AI agents in sustainability operations that can take on repeatable tasks and orchestrate complex workflows.

That approach matters whether you are operating in North America, Southeast Asia, or the Middle East, because the pressure to improve ESG performance, increase transparency, and do more with less is only growing.

Closing Thought

AI can be a practical advantage for sustainability leaders, but only when it is applied with purpose and governed well. The organizations creating the most value are not chasing novelty; they are building AI capabilities that improve decision-making, reduce manual work, and strengthen ESG, climate, and supply chain performance.